Is it possible to use technology to tell better stories? Absolutely.

As a content-focused digital agency, Four Kitchens is familiar with how our partners struggle to create the large amounts of content required to keep a website relevant. Even when they have the resources and capabilities to create that much content, they still face the challenge of keeping the website fresh and usable through proper governance and constant maintenance.

The technologists at Four Kitchens have been fascinated with the advancements in Artificial Intelligence and Machine Learning for years, and we’re always looking for ways to make content management solutions easier and more effective. That’s why at DrupalCon Seattle 2019 we showcased how to use APIs and Machine Learning tools to tell better stories and create content faster and smarter.

We believe our humble Machine Learning experiment can change the way you create and manage content.

Intelligent content for websites

HappyGram.ai takes a short anecdote and automatically serves up potential images to support and enhance it. Imagine you are creating content for your organization’s website and you are automatically presented with image options that perfectly represent portions of the text. Imagine how you could boost readership through strong visualization tactics, and how much time your editorial team could save.

It’s an exciting point in history and the future of machine learning is full of possibility. At Four Kitchens, we intend to harness this powerful technology to make our partners more effective and efficient. We make content go!

HappyGram.ai: A Drupal website that thinks with you

HappyGram.ai is a product that brings together the visual elements of storytelling with words you provide. We do this through multiple sources working together behind one editing experience:

- Google Natural Language API

- AutoML Model

- Unsplash (Free stock photography database)

- Drupal

Tell us about your happy moment

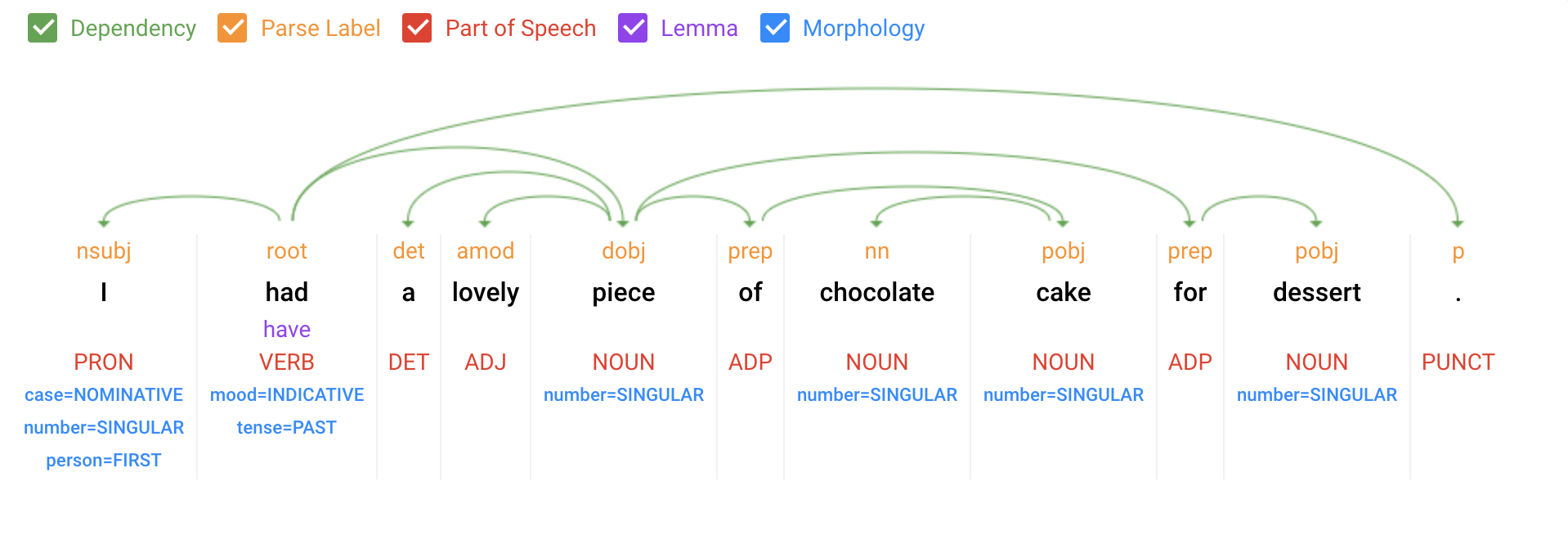

HappyGram.ai is centered around an editing experience built in Drupal in which humans share a happy moment from their lives, spoken or typed in concise, natural language, and then submit it for processing. The text of the anecdote is sent to Google's Natural Language API, which breaks down the text and returns an array of all the words therein, conjunctions included. Each of these "syntax tokens" contains references to what part of speech it is and what its root word is.
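To make the idea of a "syntax token" concrete, here is a minimal sketch of reading tokens out of a syntax-analysis response. The field names (`text.content`, `partOfSpeech.tag`, `lemma`, `dependencyEdge.headTokenIndex`) follow the shape of the Natural Language API's `analyzeSyntax` JSON response. The sample payload is hand-written for illustration rather than real API output, and `Token` and `parse_tokens` are hypothetical names, not HappyGram.ai code. Because "root word" could mean either the lemma or the token a word depends on, the sketch keeps both.

```python
from dataclasses import dataclass

# Hand-written sample shaped like an analyzeSyntax response (illustrative only).
SAMPLE_RESPONSE = {
    "tokens": [
        {"text": {"content": "I"}, "partOfSpeech": {"tag": "PRON"},
         "lemma": "I", "dependencyEdge": {"headTokenIndex": 1, "label": "NSUBJ"}},
        {"text": {"content": "walked"}, "partOfSpeech": {"tag": "VERB"},
         "lemma": "walk", "dependencyEdge": {"headTokenIndex": 1, "label": "ROOT"}},
        {"text": {"content": "my"}, "partOfSpeech": {"tag": "PRON"},
         "lemma": "my", "dependencyEdge": {"headTokenIndex": 3, "label": "POSS"}},
        {"text": {"content": "dog"}, "partOfSpeech": {"tag": "NOUN"},
         "lemma": "dog", "dependencyEdge": {"headTokenIndex": 1, "label": "DOBJ"}},
    ]
}


@dataclass
class Token:
    index: int       # position of the token in the sentence
    text: str        # the word as written
    pos: str         # part-of-speech tag, e.g. "NOUN" or "VERB"
    lemma: str       # dictionary form of the word
    head_index: int  # index of the token this one depends on


def parse_tokens(response):
    """Flatten the nested response into simple Token records."""
    return [
        Token(
            index=i,
            text=raw["text"]["content"],
            pos=raw["partOfSpeech"]["tag"],
            lemma=raw["lemma"],
            head_index=raw["dependencyEdge"]["headTokenIndex"],
        )
        for i, raw in enumerate(response.get("tokens", []))
    ]


tokens = parse_tokens(SAMPLE_RESPONSE)
for token in tokens:
    head = tokens[token.head_index]
    print(f"{token.text}: {token.pos}, lemma={token.lemma}, depends on {head.text}")
```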

Processing and parsing your words

It was then our job to take the language entities parsed by the Natural Language API and turn them into meaningful search phrases for Unsplash’s photo database. This took some tweaking and experimenting, but over time we discovered that we got decent results by taking the full list of parsed entities (nouns) in order of salience and checking whether each one has a direct verb root token to pair it with.

This is repeated with adverb and adjective lookup as appropriate, and the final list of noun phrases is saved. Next, we get a list of verbs and, for each one, look up the tokens that use it as their root token and are not themselves verbs, pronouns, or conjunctions, then combine the ones that fit. This final list of verb phrases is merged with the noun phrase list, giving us a flat set of photo searches to attempt in order of salience. Photo search phrases that don't return anything are not presented to the HappyGram.ai user.
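The phrase-building steps above can be sketched roughly as follows. This is one reading of the description, not the actual HappyGram.ai code: the `Token` shape, the function names, the hand-written sample sentence, and the choice to list verb phrases after noun phrases are all assumptions made for illustration. The part-of-speech tags ("NOUN", "VERB", "ADJ", "PRON", "CONJ") match tag names used by the Natural Language API.

```python
from dataclasses import dataclass


@dataclass
class Token:
    text: str
    pos: str   # part-of-speech tag
    head: int  # index of the token this one depends on (its root token)


# Dependents with these tags are never paired with a verb.
SKIP_FOR_VERBS = {"VERB", "PRON", "CONJ"}


def noun_phrases(tokens, entities):
    """Build one phrase per entity, most salient first.

    entities is a list of (name, salience) pairs.
    """
    phrases = []
    for name, _salience in sorted(entities, key=lambda e: e[1], reverse=True):
        idx = next((i for i, t in enumerate(tokens)
                    if t.text == name and t.pos == "NOUN"), None)
        if idx is None:
            continue
        # Adjectives that hang off the noun go in front of it.
        words = [t.text for t in tokens if t.head == idx and t.pos == "ADJ"]
        words.append(name)
        # If the noun depends directly on a verb, lead with that verb.
        head_idx = tokens[idx].head
        if head_idx != idx and tokens[head_idx].pos == "VERB":
            words.insert(0, tokens[head_idx].text)
        phrases.append(" ".join(words))
    return phrases


def verb_phrases(tokens):
    """Pair each verb with its direct dependents, minus the skipped tags."""
    phrases = []
    for i, verb in enumerate(tokens):
        if verb.pos != "VERB":
            continue
        for j, dep in enumerate(tokens):
            if j != i and dep.head == i and dep.pos not in SKIP_FOR_VERBS:
                phrases.append(f"{verb.text} {dep.text}")
    return phrases


def search_phrases(tokens, entities):
    """Merge both lists into one flat, de-duplicated list of searches."""
    merged = noun_phrases(tokens, entities) + verb_phrases(tokens)
    return list(dict.fromkeys(merged))


# "I walked my happy dog in the park", tagged by hand for this example.
TOKENS = [
    Token("I", "PRON", 1), Token("walked", "VERB", 1), Token("my", "PRON", 4),
    Token("happy", "ADJ", 4), Token("dog", "NOUN", 1), Token("in", "ADP", 1),
    Token("the", "DET", 7), Token("park", "NOUN", 5),
]
ENTITIES = [("park", 0.3), ("dog", 0.7)]  # made-up salience values

for phrase in search_phrases(TOKENS, ENTITIES):
    print(phrase)
```

A sketch this simple also produces weak pairings such as a verb plus a bare preposition, which is where the "combine the ones that fit" judgment and the rule of dropping searches with no results would come in.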

Categorizing your happy moment

The AutoML Model helped us identify and apply preset categories to the happy moments we were collecting. As more and more happy moments were collected and processed, this model became more accurate in how it applied categories.

Applying machine-taught photo enhancements

As the final step, we applied filters and photo enhancements to each user’s “HappyGram” based on two criteria: category and sentiment value. Each of the seven categories (achievement, affection, bonding, enjoy the moment, exercise, leisure, and nature) was represented by a specific Instagram-like filter. We boosted and brightened colors for positive sentiment, and toned down colors for negative sentiment.
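As a rough sketch of how the two criteria might combine, the snippet below maps a category to a filter and turns a sentiment score into color adjustments. The filter names, the scaling factors, and the `enhancement_for` helper are hypothetical, chosen only to illustrate the idea. It assumes a sentiment score between -1.0 and 1.0, the range the Natural Language API's sentiment analysis reports.

```python
# Hypothetical filter names, one per category from the list above.
CATEGORY_FILTERS = {
    "achievement": "gold",
    "affection": "rose",
    "bonding": "warm",
    "enjoy the moment": "vivid",
    "exercise": "contrast",
    "leisure": "soft",
    "nature": "green",
}


def enhancement_for(category, sentiment_score):
    """Pick a filter by category and color adjustments by sentiment.

    A multiplier of 1.0 means "leave the photo unchanged".
    """
    score = max(-1.0, min(1.0, sentiment_score))  # clamp to the expected range
    return {
        "filter": CATEGORY_FILTERS[category],
        # Positive scores boost color; negative scores tone it down.
        "saturation": round(1.0 + 0.5 * score, 2),
        # Only positive scores brighten the image.
        "brightness": round(1.0 + 0.25 * max(score, 0.0), 2),
    }


print(enhancement_for("nature", 0.8))
print(enhancement_for("leisure", -0.6))
```

In a full pipeline, values like these would then drive the image processing applied to the photo chosen from Unsplash.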

The outcome looks something like this, a meme-like visual story that’s personal, yet built by robots: